In today’s rapidly digitizing world, tokenization (tokenizasyon in Turkish) has emerged as a cornerstone concept in securing digital assets, enhancing financial processes, and streamlining transactional operations. Tokenization is the process of converting sensitive data into non-sensitive equivalents, known as tokens, that can be used safely in digital systems without exposing the original information. It not only strengthens security but also enables businesses and consumers to adopt digital platforms confidently, mitigating risks of fraud and data breaches. Increasingly, financial institutions, e-commerce platforms, and blockchain networks are integrating tokenization to manage sensitive information, enhance privacy, and comply with regulatory frameworks. By converting credit card numbers, personal identifiers, or digital assets into tokens, organizations can maintain operational efficiency while adhering to stringent security protocols.

Tokenization is not merely a technical procedure but a transformative approach to digital asset management. Experts like Marianne Liu, a cybersecurity strategist, emphasize, “Tokenization represents the future of secure digital transactions; it is the bridge between operational convenience and risk mitigation.” Similarly, Dr. Samuel Ortiz, an economist specializing in digital finance, notes, “By abstracting sensitive information, tokenization creates a resilient ecosystem where both consumers and institutions can transact without fear of compromise.” This dual emphasis on security and functionality is why tokenization has become critical across industries, influencing everything from payment processing to digital identity management. Its adoption signals a shift in how organizations approach sensitive information, prioritizing security while enabling seamless digital interaction.

What is Tokenization and How Does it Work?

Tokenization is the practice of replacing sensitive data elements with unique identification symbols, or tokens, that keep only the properties a system needs to operate (such as length or format) and are useless if intercepted. Unlike encryption, where ciphertext can be reverted to its original form with a key, a randomly generated token has no mathematical relationship to the value it stands for, so the original cannot be recovered from the token alone; recovery requires access to the token vault. For example, in credit card processing, the primary account number (PAN) is substituted with a token for storage or processing. Even if cybercriminals gain access to the token, they cannot derive the card number from it, which insulates the original data. The process relies on secure tokenization systems that generate tokens deterministically or randomly, depending on the application, while maintaining a secure mapping to the original data in a protected token vault.
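
The vault mapping described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `TokenVault` class, the `tok_` prefix, and the in-memory dictionaries are all hypothetical, and a real vault would sit behind hardened storage and access controls.

```python
import secrets


class TokenVault:
    """Toy in-memory vault (hypothetical) mapping random tokens to values."""

    def __init__(self):
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so one value never ends up with two tokens.
        if value in self._value_to_token:
            return self._value_to_token[value]
        token = "tok_" + secrets.token_hex(8)
        # Regenerate on the very unlikely chance of a collision.
        while token in self._token_to_value:
            token = "tok_" + secrets.token_hex(8)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        # Only the vault can map a token back; unknown tokens raise KeyError.
        return self._token_to_value[token]


vault = TokenVault()
pan = "4111111111111111"  # widely used test card number, not a real account
token = vault.tokenize(pan)
```

The token is drawn from a random source rather than computed from the card number, so someone holding only `token` has nothing to invert; the original value is available only through `vault.detokenize(token)`.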

Technically, tokenization involves several steps: identification of sensitive data, creation of tokens using advanced algorithms, storage of tokens in a secure repository, and implementation of token-based processes in applications. Tokenization can be applied across various digital environments, from financial transactions and healthcare records to blockchain-based assets. In each case, the objective remains the same: prevent exposure of the underlying data while preserving functionality. As cybersecurity analyst Rachel Donovan explains, “Tokenization is not a one-size-fits-all solution; it must be tailored to specific data types and operational contexts to maximize protection without disrupting workflow.” This flexibility makes tokenization a versatile tool in modern digital security.
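
The four steps listed above can be traced through a single record. The sketch below is illustrative only: the field names in `SENSITIVE_FIELDS`, the `tokenize_record` helper, and the plain dictionary standing in for the secure repository are assumptions made for the example.

```python
import secrets

# Assumption: a data-classification policy has flagged these field names.
SENSITIVE_FIELDS = {"card_number", "ssn"}


def tokenize_record(record: dict, vault: dict) -> dict:
    """Return a copy of `record` with sensitive fields replaced by tokens."""
    safe = {}
    for field, value in record.items():
        if field in SENSITIVE_FIELDS:              # step 1: identify sensitive data
            token = "tok_" + secrets.token_hex(8)  # step 2: create a token
            vault[token] = value                   # step 3: store the mapping
            safe[field] = token
        else:
            safe[field] = value
    return safe                                    # step 4: applications use this copy


vault = {}  # stand-in for a protected token repository
order = {"order_id": "A-1001", "card_number": "4111111111111111", "amount": "19.99"}
safe_order = tokenize_record(order, vault)
```

Downstream systems would receive `safe_order`, in which `order_id` and `amount` are unchanged and `card_number` holds a token, while the original record never leaves the tokenization boundary.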

Applications of Tokenization Across Industries

Tokenizasyon has transcended its original use in payment processing to become integral in several sectors:

- Financial Services: Tokenization is widely used to protect credit card information, streamline transactions, and enable digital wallets. Payment networks like Visa and Mastercard have integrated tokenization to reduce fraud risks.

- Healthcare: Patient records and medical identifiers are tokenized to comply with HIPAA and other privacy regulations, ensuring that sensitive health information remains confidential.

- E-Commerce: Online platforms implement tokenization to protect consumer payment data, enhancing trust and enabling faster checkouts.

- Blockchain and Digital Assets: In blockchain ecosystems, tokenization allows assets such as real estate, stocks, or digital art to be represented digitally, improving liquidity and fractional ownership capabilities.

- Identity Management: Personal identifiers, such as social security numbers and government-issued IDs, are tokenized to reduce identity theft risks and facilitate secure online verification processes.

According to industry research, organizations that implement tokenization report a 40-60% reduction in data breach risks. This statistic underscores tokenization’s value as both a preventive measure and a trust-building mechanism for digital interactions.

Tokenization vs. Encryption: Understanding the Difference

While often conflated, tokenization and encryption serve different purposes in data security. Encryption transforms data using mathematical algorithms into a ciphertext, which can be reversed with a key. Tokenization replaces sensitive information with a token that has no intrinsic value and cannot be reverted without access to a secure token vault. This distinction is crucial for organizations planning comprehensive data protection strategies.

| Feature | Tokenization | Encryption |
|---|---|---|
| Reversibility | Not reversible without token vault | Reversible with key |
| Security Focus | Data abstraction | Data transformation |
| Use Case | Payment info, digital assets, identity data | Data transmission, storage, cloud computing |
| Regulatory Compliance | HIPAA, PCI DSS | GDPR, HIPAA, other regulations |
| Performance | Reduces data footprint, minimal processing overhead | May introduce computational overhead |

Experts suggest that combining tokenization with encryption often provides an optimal approach, particularly for high-risk environments where regulatory compliance and operational efficiency are equally important. By understanding these differences, organizations can design a multi-layered security framework that maximizes protection while minimizing operational friction.

Benefits of Implementing Tokenization

Tokenizasyon provides numerous advantages for both organizations and consumers, including:

- Enhanced Security: Sensitive information is abstracted into tokens, reducing exposure and minimizing fraud risks.

- Regulatory Compliance: Many industries face strict rules on handling sensitive data; tokenization simplifies compliance.

- Operational Efficiency: Tokenization allows secure data storage and transfer without requiring encryption for every operation.

- Consumer Trust: By ensuring secure transactions, organizations build credibility and loyalty.

- Flexible Integration: Tokens can be applied to multiple systems and platforms, supporting diverse applications without extensive redesigns.

| Benefit | Impact |
|---|---|
| Data Breach Risk Reduction | 40–60% decrease in reported breaches |
| Regulatory Alignment | Simplifies compliance reporting and audits |
| Operational Efficiency | Faster transaction processing with secure data handling |
| Customer Trust | Increases retention and positive brand perception |
| Scalability | Supports cross-platform digital initiatives |

Dr. Amina Patel, a data privacy consultant, remarks, “Tokenization is a strategic tool that aligns business goals with privacy mandates. It transforms risk into manageable processes.” Similarly, fintech entrepreneur Lewis Graham notes, “Tokenization doesn’t just protect data—it empowers digital growth by providing a secure foundation for innovation.”

Advanced Tokenization Methods and Technologies

As organizations increasingly adopt tokenization, sophisticated methods have emerged to enhance security, scalability, and operational efficiency. One prominent technique is format-preserving tokenization (FPT), which replaces sensitive data with a token maintaining the original format. For example, a credit card number token retains its length and structure, allowing legacy systems to process transactions without extensive modifications. This approach is particularly useful in industries where infrastructure upgrades are costly or disruptive. Another method is deterministic tokenization, where the same input consistently generates the same token. Deterministic tokens support analytics and repeat verification while maintaining confidentiality, making them valuable in customer loyalty programs and recurring payment systems. In contrast, random tokenization generates unpredictable tokens, enhancing security by eliminating patterns that could be exploited by attackers.
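
To make the three methods above concrete, here is a hedged Python sketch. The function names, the demo key, and the choice of HMAC-SHA256 for the deterministic variant are assumptions for illustration; real products may use different constructions. The format-preserving variant simply draws random digits and keeps the last four, so it does not guarantee that a token can never resemble a valid card number.

```python
import hashlib
import hmac
import secrets

# Assumption: in practice this key would be held in a key manager or HSM.
SECRET_KEY = b"demo-key-not-for-production"


def deterministic_token(value: str) -> str:
    """Same input and key always yield the same token (keyed HMAC-SHA256)."""
    digest = hmac.new(SECRET_KEY, value.encode(), hashlib.sha256).hexdigest()
    return digest[:24]


def random_token(value: str, vault: dict) -> str:
    """Unpredictable token; the vault entry is the only link to the value."""
    token = secrets.token_hex(12)
    vault[token] = value
    return token


def format_preserving_token(card_number: str, vault: dict) -> str:
    """Random digits of the same length, keeping the last four digits."""
    body_len = len(card_number) - 4
    body = "".join(secrets.choice("0123456789") for _ in range(body_len))
    token = body + card_number[-4:]
    vault[token] = card_number
    return token


vault = {}
pan = "4111111111111111"  # widely used test card number
det = deterministic_token(pan)
rnd = random_token(pan, vault)
fpt = format_preserving_token(pan, vault)
```

Calling `deterministic_token(pan)` twice gives the same string, which is what allows joins and repeat lookups on tokenized data. `random_token` gives a fresh value on each call, and `fpt` is a 16-digit string that a legacy field validator expecting a card-shaped value would likely accept.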

Innovations in hardware-based tokenization are also gaining traction. Secure Enclaves and Hardware Security Modules (HSMs) are leveraged to generate and store tokens in isolated, tamper-resistant environments. These technologies ensure that even if the broader system is compromised, tokenization keys and mapping tables remain secure. Dr. Elena Markovic, a cybersecurity engineer, emphasizes, “Hardware-backed tokenization adds a layer of resilience. It transforms digital security from a reactive posture to proactive protection.” The combination of advanced tokenization methods and hardware integration allows organizations to balance security, performance, and regulatory compliance across diverse applications.

Tokenization in Blockchain and Digital Asset Management

One of the most transformative applications of tokenizasyon lies in blockchain and digital asset ecosystems. Tokenization in this context refers to representing tangible or intangible assets as digital tokens on a distributed ledger. This process enables fractional ownership, transparent transactions, and enhanced liquidity. For instance, real estate properties, art pieces, or even intellectual property can be divided into tradable digital tokens, making previously illiquid assets accessible to a broader market. In decentralized finance (DeFi), tokenized assets facilitate lending, staking, and trading without reliance on traditional intermediaries, democratizing investment opportunities globally.

Blockchain tokenization also introduces programmable capabilities through smart contracts, which automate execution based on pre-defined conditions. This feature reduces administrative overhead, mitigates fraud, and accelerates settlement processes. As blockchain strategist Diego Alvarez explains, “Tokenization on distributed ledgers is not just about security—it’s about creating a new paradigm for ownership, governance, and value exchange.” Additionally, tokenized assets can be integrated with traditional finance systems, creating hybrid models that leverage the transparency and security of blockchain while maintaining compatibility with conventional regulatory frameworks.
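
Fractional ownership and rule-based transfers can be illustrated without a blockchain. The Python sketch below is a plain in-memory ledger, not a smart contract: the `TokenizedAsset` class, the asset name, and the holder names are hypothetical, and the checks inside `transfer` only mimic the kind of pre-defined condition a contract would enforce on-chain.

```python
class TokenizedAsset:
    """Toy ledger (hypothetical) for an asset split into a fixed number of units."""

    def __init__(self, name: str, total_units: int, issuer: str):
        self.name = name
        self.total_units = total_units
        self.balances = {issuer: total_units}

    def transfer(self, sender: str, receiver: str, units: int) -> None:
        # Pre-defined conditions checked in code before any balance changes.
        if units <= 0:
            raise ValueError("units must be positive")
        if self.balances.get(sender, 0) < units:
            raise ValueError("insufficient balance")
        self.balances[sender] -= units
        self.balances[receiver] = self.balances.get(receiver, 0) + units

    def ownership_share(self, holder: str) -> float:
        # Fraction of the whole asset represented by the holder's units.
        return self.balances.get(holder, 0) / self.total_units


building = TokenizedAsset("12 Example Street", total_units=1000, issuer="issuer")
building.transfer("issuer", "alice", 250)
share = building.ownership_share("alice")
```

After the transfer, `alice` holds 250 of 1,000 units, and a transfer that exceeds the sender's balance is rejected before any state changes.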

Tokenization Challenges and Risks

Despite its numerous advantages, tokenizasyon is not without challenges. One significant concern is the secure management of the token vault, which stores the mapping between tokens and original data. If the vault is compromised, the integrity of the tokenization system is at risk. Organizations must implement rigorous access controls, encryption, and monitoring to safeguard this critical component. Another challenge lies in regulatory compliance across jurisdictions. Different regions may have varying rules regarding tokenized data, especially when it pertains to financial transactions or personally identifiable information. Companies operating globally must navigate complex legal frameworks to ensure both security and legal compliance.
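
One way to picture the access controls and monitoring mentioned above is a vault that checks the caller's role and records every detokenization attempt. This is a simplified sketch: the `GuardedVault` class, the role names, and the audit-record fields are assumptions, and it leaves out encryption at rest, authentication of the caller, and durable log storage.

```python
import secrets
from datetime import datetime, timezone


class GuardedVault:
    """Toy vault (hypothetical) with role checks and an audit trail."""

    def __init__(self, allowed_roles):
        self._store = {}
        self._allowed_roles = set(allowed_roles)
        self.audit_log = []

    def tokenize(self, value: str) -> str:
        token = "tok_" + secrets.token_hex(8)
        self._store[token] = value
        return token

    def detokenize(self, token: str, caller: str, role: str) -> str:
        allowed = role in self._allowed_roles
        # Record every attempt, including denied ones, for later monitoring.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "caller": caller,
            "role": role,
            "token": token,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"role {role!r} may not detokenize")
        return self._store[token]


guarded = GuardedVault(allowed_roles={"payments-service"})
ssn_token = guarded.tokenize("123-45-6789")  # made-up identifier for the demo
value = guarded.detokenize(ssn_token, caller="svc-1", role="payments-service")
```

A call with a role outside `allowed_roles` raises `PermissionError` and still leaves an entry in `audit_log`, which is the kind of signal a monitoring process could alert on.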

Integration complexity also presents a barrier. Legacy systems often require extensive modifications to accommodate tokenized data, particularly when tokens do not match the length or format of the original values; format-preserving tokens can reduce this burden, though they do not eliminate integration work. Additionally, tokenization cannot address all cyber risks; it should be part of a comprehensive security strategy that includes encryption, authentication, intrusion detection, and ongoing monitoring. As cybersecurity expert Marcus Chen warns, “Tokenization is powerful, but it’s not a silver bullet. Organizations must combine it with holistic security measures to truly mitigate digital threats.” Recognizing these challenges is critical for strategic planning and sustainable implementation of tokenization systems.

Global Adoption Trends and Industry Insights

Tokenizasyon adoption is accelerating across multiple industries and regions, driven by regulatory requirements, increasing cyber threats, and digital transformation initiatives. In North America and Europe, financial institutions are leading the charge, integrating tokenization to comply with PCI DSS, GDPR, and other stringent regulations. Asia-Pacific markets are also experiencing rapid growth, particularly in fintech and e-commerce sectors, where digital payments and cross-border transactions demand enhanced security. Emerging markets, meanwhile, are exploring tokenization to improve financial inclusion, enabling secure access to digital banking services for unbanked populations.

Industry reports indicate that nearly 70% of top global banks have implemented some form of tokenization, with an emphasis on securing payment credentials and customer data. Retail and healthcare sectors are following suit, using tokenization to safeguard consumer data while maintaining operational efficiency. According to fintech consultant Anya Petrov, “Tokenization adoption is no longer optional—it is a strategic imperative. Organizations that fail to implement secure token systems risk not only regulatory penalties but also erosion of customer trust.” This trend underscores the global recognition of tokenization as both a security mechanism and a business enabler, driving innovation while protecting sensitive information.

Future Outlook and Emerging Innovations

The evolution of tokenization is expected to continue rapidly, fueled by technological advancements, regulatory guidance, and market demand. Future innovations are likely to focus on interoperable tokenization frameworks, allowing secure data exchange across different platforms, industries, and borders. Additionally, integration with artificial intelligence and machine learning may enhance tokenization systems by detecting anomalies in token usage, identifying fraudulent patterns, and dynamically adjusting tokenization strategies for optimal security. Another promising area is quantum-resistant tokenization, which prepares for the potential threat posed by quantum computing to traditional cryptographic methods.

Experts predict that tokenization will increasingly merge with digital identity solutions, enabling secure, user-centric authentication across financial services, healthcare, and government platforms. As cybersecurity thought leader Rafael Guzman observes, “Tokenization is evolving from a defensive mechanism into a proactive enabler of digital trust. The next decade will witness seamless, secure interactions powered by tokenized ecosystems.” Organizations that adopt forward-thinking tokenization strategies will not only protect data but also unlock new digital business models, creating opportunities for innovation and growth across multiple sectors.

FAQs

1. What is tokenizasyon, and how is it different from encryption?

Tokenizasyon is the process of replacing sensitive data with non-sensitive identifiers called tokens. Unlike encryption, which transforms data into unreadable ciphertext that can be reversed with a decryption key, tokenization creates a token that cannot be reversed without access to a secure token vault. This makes tokenization inherently secure, as the tokens are meaningless outside their intended context. While encryption focuses on transforming data, tokenization focuses on abstracting it, allowing systems to operate without exposing the original information. Many organizations combine both methods to create multi-layered security frameworks that maximize data protection.

2. Which industries benefit most from tokenization?

Tokenizasyon has broad applications across multiple sectors. Financial services use it to protect credit card information and digital wallets, healthcare employs tokenization to secure patient records, e-commerce platforms safeguard consumer payment data, and blockchain networks leverage it to represent assets digitally. Additionally, identity management systems use tokenization to protect personal identifiers, reducing the risk of identity theft. Essentially, any industry handling sensitive information can benefit from tokenization, especially those facing stringent regulatory compliance requirements like HIPAA, GDPR, or PCI DSS.

3. Is tokenization completely risk-free?

While tokenization significantly reduces data exposure and fraud risk, it is not entirely risk-free. The security of the system depends on protecting the token vault—the secure repository mapping tokens to original data. If this vault is compromised, attackers may gain access to sensitive information. Other risks include implementation errors, insufficient access controls, and regulatory compliance challenges across jurisdictions. Tokenization should be implemented alongside complementary security measures, such as encryption, monitoring, authentication, and intrusion detection, to create a robust security posture.

4. Can tokenization be applied to digital assets and blockchain?

Yes. Tokenization in blockchain ecosystems allows tangible or intangible assets, such as real estate, artwork, or intellectual property, to be represented as digital tokens. These tokens enable fractional ownership, transparent transactions, and enhanced liquidity. Through smart contracts, tokenized assets can automate processes like payments, transfers, or ownership verification. This method opens new investment opportunities, reduces reliance on intermediaries, and fosters global access to previously illiquid markets. Tokenization is a foundational component in the emerging Decentralized Finance (DeFi) ecosystem.

5. What are the main types of tokenization?

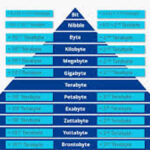

Tokenization can be categorized based on its technical method and application:

- Format-preserving tokenization (FPT): Retains the original data format, allowing legacy systems to operate without modifications.

- Deterministic tokenization: Generates the same token for repeated inputs, useful for analytics and recurring verification.

- Random tokenization: Produces unpredictable tokens, maximizing security by eliminating detectable patterns.

- Hardware-backed tokenization: Uses secure modules like HSMs or Secure Enclaves to create and store tokens in isolated, tamper-resistant environments.